Legal experts are urging ministers to promptly revise the law to recognize the fallibility of computers, or else face the possibility of another scandal like the Horizon case.

In English and Welsh law, computers are presumed to be reliable unless proven otherwise. Critics of this presumption argue that it reverses the burden of proof, which normally rests on the prosecution in criminal proceedings.

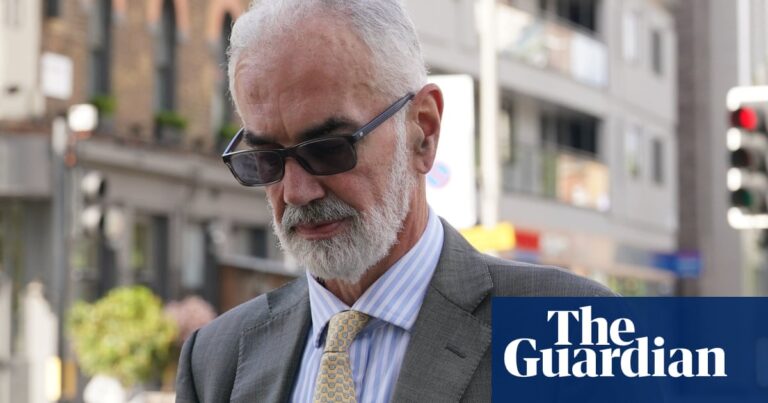

According to Stephen Mason, a barrister and specialist in digital evidence, the burden of proof lies with the person claiming that something is wrong with the computer, even when it is their accuser who holds the relevant information.

In 2020, the government asked Mason and a group of eight legal and computer professionals to propose changes to the law. This request came after a court decision that went against the Post Office. However, the suggestions they provided were not implemented.

Mason and his colleagues had been raising concerns about the presumption since 2009. He believes the Post Office would not have got as far as it did had the presumption not been in place.

The presumption that computers are reliable derives from an established legal principle that mechanical devices are presumed to be working properly unless proven otherwise. This means that if a police officer gives the time from their watch as evidence, the defendant cannot demand that a watch expert be brought in to explain how watches work.

For a period, computers in England and Wales did not enjoy this presumption. A 1984 act of parliament allowed computer evidence to be used only if it could be shown that the computer was functioning correctly. That law was repealed in 1999, shortly before the first trials of the Horizon system took place.

On the strength of faulty evidence from the Horizon system, post office operators were found guilty of stealing money without any other solid proof against them. Because of the presumption, they had little means of challenging the system in court and were almost guaranteed to lose.

Because English common law is influential around the world, the presumption of reliability is widespread. Mason points to cases from New Zealand, Singapore and the US that upheld the standard, with only one notable exception.

In 2007, a Toyota Camry on an Oklahoma highway suddenly accelerated and stopped responding to its controls. The resulting crash killed one woman and seriously injured another. At the time there were numerous reports of Toyota vehicles accelerating uncontrollably, but the panicked messages from the passengers in this incident were among the few pieces of concrete evidence pointing to an electronic malfunction.

According to one expert witness, even a small error in computer code can cause a loss of control over engine speed in real vehicles, and such a malfunction is not always caught by safety mechanisms. After a jury awarded $3 million in compensation to the two women, Toyota settled the case to avoid further penalties.

According to Noah Waisberg, the co-founder and CEO of Zuva, the development of AI technology has highlighted the need to review legal regulations. He explains that while a computer typically follows instructions in a rules-based system, there is still a possibility of errors occurring. Therefore, it is important to recognize that no computer program can be guaranteed to be completely free of mistakes.

Machine learning systems do not work this way. They are probabilistic, and should not be expected to behave consistently, only to perform in line with their projected accuracy.

Waisberg stated: “It may be difficult to assert that they are dependable enough to justify a criminal conviction.”

According to James Christie, a software consultant and co-author of the recommendations for updating the law, the change could come in two stages. The first would require those providing evidence to show the court that they have developed and managed their systems responsibly, and to disclose any known bugs.

“If the provider cannot …, it is their responsibility to demonstrate to the court why these failures or issues do not impact the credibility of the evidence and why it should still be deemed reliable.”

The Ministry of Justice has been approached for comment.

Source: theguardian.com